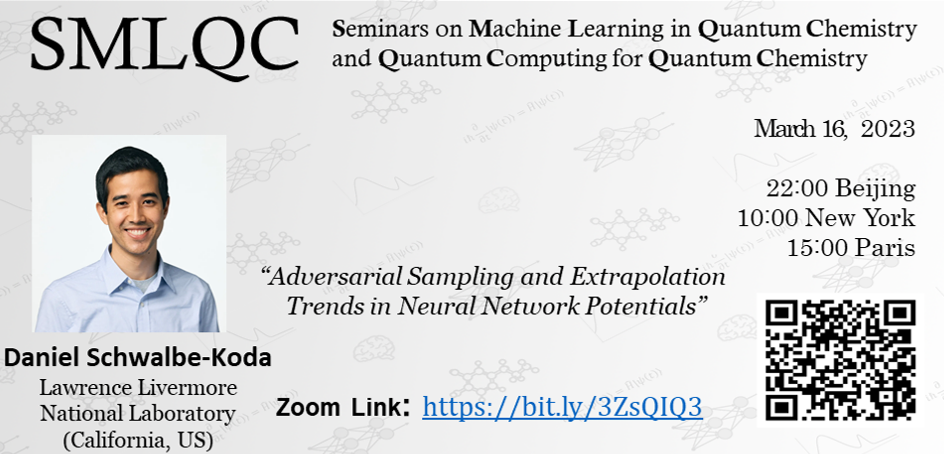

The 4th SMLQC seminar will be given by Daniel Schwalbe-Koda on March 16, 2023 (15:00 Paris | 22:00 Beijing | 10:00 New York).

Title

Adversarial Sampling and Extrapolation Trends in Neural Network Potentials

Abstract

In the last few years, several neural network interatomic potentials (NNIPs) have been proposed to improve model accuracy in a variety of benchmarks. Despite these advances, NNIPs still struggle to generalize outside of their training domain. In this talk, I will describe how neural network generalization can be improved with adversarial sampling and analyzed using loss landscapes. First, I will show how NNIP generalization can be improved by combining uncertainty quantification, active learning, and adversarial attacks. This differentiable sampling strategy increases model robustness in production simulations despite using fewer data points than traditional active learning strategies. Furthermore, I will show how extrapolation trends can be derived from the loss landscapes of NNIPs, with sharper loss landscapes associated with poorer robust generalization. Conversely, some training routines are shown to improve the loss landscape and generalization ability of the models. This work provides deep learning-based justifications for NNIP extrapolation and can inform the development of next-generation NNIPs. Prepared by LLNL under Contract DE-AC52-07NA27344.

Introduction to the speaker

Daniel Schwalbe-Koda is a Lawrence Fellow at Lawrence Livermore National Laboratory (California, US), where he leads a project on accelerating materials discovery using high-performance computing and machine learning. He obtained a PhD in Materials Science and Engineering from MIT in 2022.